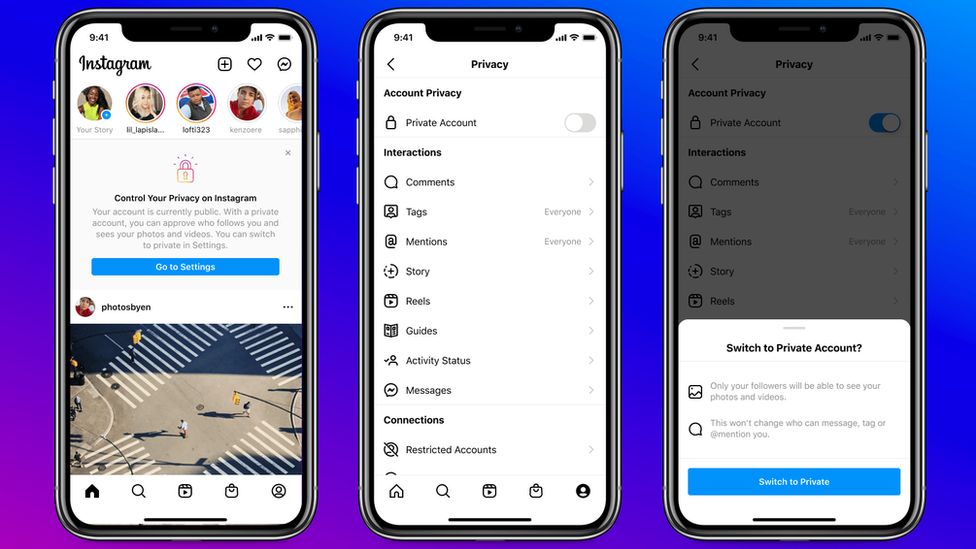

Instagram has made new under-16s’ accounts private by default so only approved followers can see posts and “like” or comment.

Tests showed only one in five opted for a public account when the private setting was the default, it said.

And existing account holders would be sent a notification “highlighting the benefits” of switching to private.

But Instagram also said it was pushing ahead with new apps for under-13s, despite a backlash from some groups.

“The reality is that they are already online and, with no foolproof way to stop people from misrepresenting their age, we want to build experiences designed specifically for them, managed by parents and guardians,” parent company Facebook said.

However, it was also developing artificial-intelligence systems to find and remove under-age accounts.

The upcoming Online Safety Bill puts the onus on technology giants to ensure sufficient safeguards to prevent children accessing potentially harmful content.

And Instagram has also:

- come under fire from children’s charities for failing to remove harmful content

- been investigated for its use of children’s data in Europe

In response, it has developed a range of child-protection measures.

In March, it announced older users would be able to message only teenagers who already followed them.

But the system relies on the age listed in the account – something younger users may lie about to avoid such restrictions.

Instagram said its latest updates were about “striking the right balance”.

Another of its changes – preventing accounts showing “potentially suspicious behaviour”, such as recently having been blocked, from messaging or following children’s accounts – would make them “difficult to find for certain adults”, Instagram said.

Meanwhile, “a more precautionary approach” will see advertisers able to target children based only on age, gender and location, rather than interests and web-browsing habits.

And while defending targeted ads in general and the opt-out features it has for some types, Instagram said: “We have heard from youth advocates that young people may not be well equipped to make these decisions.”

www.bbc.co.uk